
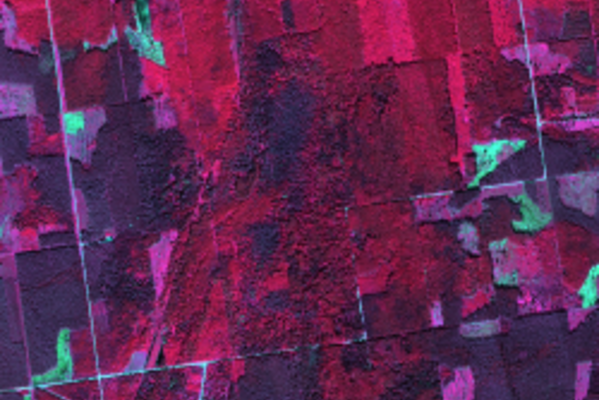

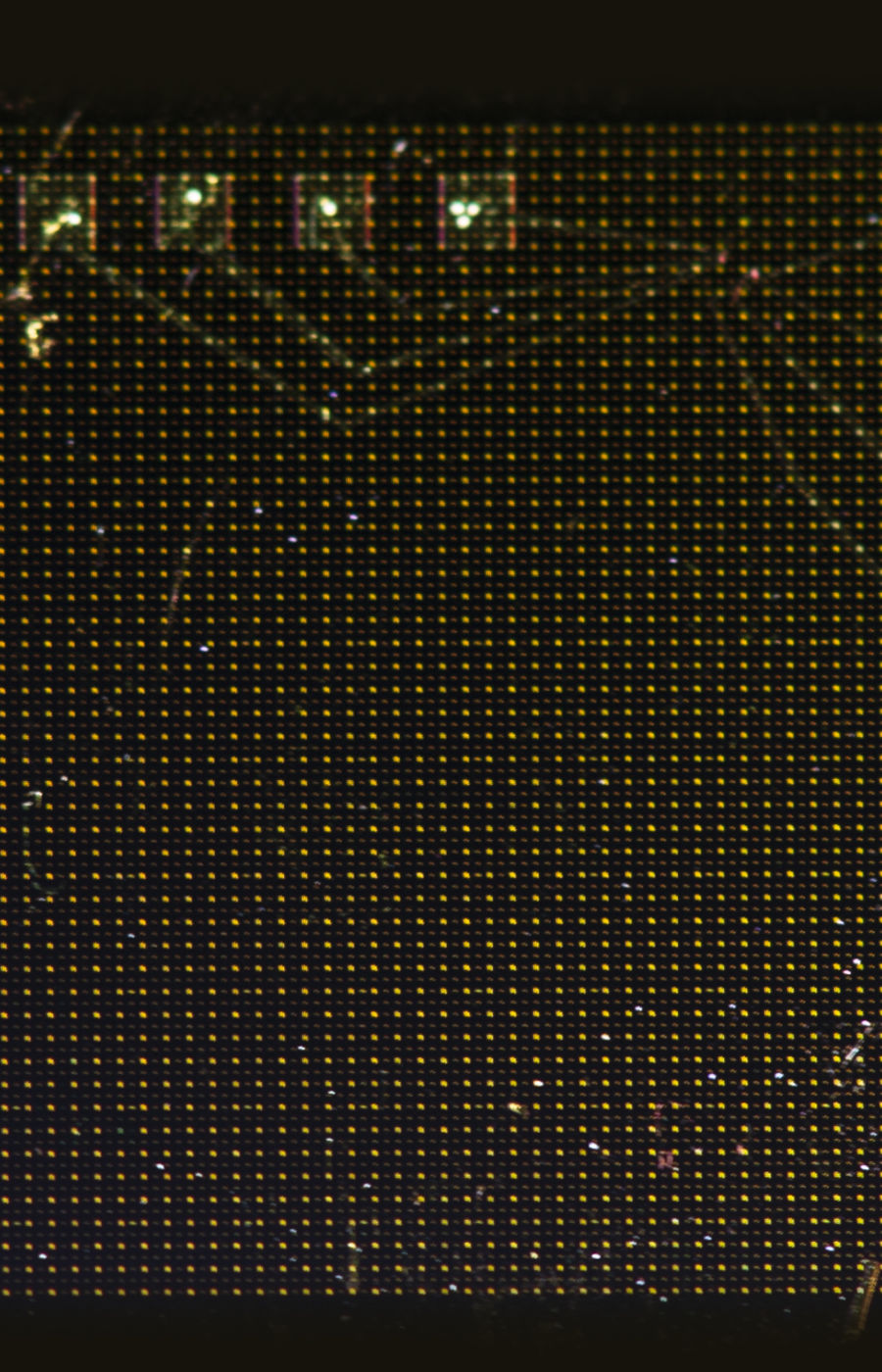

A hyperspectral snapshot captures all the light in a scene, not just colours or infrared light. The extra information is useful in many applications, from agriculture and conservation to forensics and food safety.

Text: Richard Fisher

It’s a beautiful day in early summer, and Mikael Westerlund is sitting at his cabin overlooking the Baltic Sea. Behind him are two pine trees, framed by the blue sky.

The trees, it turns out, are a helpful way to explain a smart new technology that he and several researchers at Aalto University are now seeking to exploit. It’s called hyperspectral imaging, and it relies on analysing light to reveal things about trees, plants, and agricultural crops that would otherwise be invisible.

To explain, Westerlund gestures at the pines and other evergreens surrounding him. When you look at a tree or a plant from a distance, he says, how do you know if it’s healthy or not? ‘Looking at these trees from the outside, you cannot necessarily tell with your eyes if the tree has been attacked,’ he says.

In northern Europe, foresters are reckoning with hidden invaders that attack trees: both a fungus that causes rot and pests like the bark beetle are becoming more common under climate change. Such infiltrators spread from tree to tree, often hidden within the trunk and roots until it’s too late. In Finland, tens of millions of euros are lost to the damage each year.

Knowing whether or not plants are thriving is also a challenge in agriculture, Westerlund explains. He asks you to imagine you’re a farmer running a commercial greenhouse: ‘Are you using too much fertiliser or too little? Is there enough CO2 available? Do you have too much lighting or not enough? Do you have a pest problem, or are you applying too much pesticide? Are you providing enough water or not enough?’

Experienced growers can find answers by examining their plants up close, but in large-scale farming it’s necessary to monitor enormous fields of crops from a distance. Keeping one plant healthy is doable, but hundreds of thousands at once? That’s a bigger challenge.

That’s where hyperspectral imaging comes in – allowing foresters and farmers alike to monitor vegetation from a distance, revealing details that might otherwise go unnoticed.

So what is hyperspectral technology, and how does it work? To answer that, you first need to know a bit about the nature of light and how cameras see it.

When you take a picture – say, a selfie – a sensor inside your phone camera analyses the light that scatters off your face. To construct a picture, it assigns each pixel a mixture of the colours red, green and blue. This is similar to how our eyes work. But through this process, a great deal of information about the light is lost.

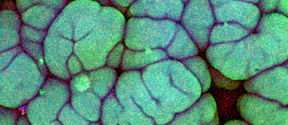

When light hits a hyperspectral camera, it is analysed into far more than three colours per pixel. The camera records the ‘spectral signature’ of the light from each point in a scene, taking in much more information about its wavelength and intensity than a standard camera and making measurements across a wider range of the electromagnetic spectrum. It sees things that even our eyes cannot.
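To make the contrast with an ordinary photo concrete, here is a minimal sketch in Python. It is an illustration only: the band wavelengths, the reflectance values and the helper names `make_cube` and `spectral_signature` are all made up for this example and do not come from any real camera or from the researchers' software.

```python
# Illustrative only: tiny made-up numbers, not real measurements.

# A standard camera stores three values per pixel: red, green, blue.
rgb_pixel = (0.21, 0.48, 0.17)

# A hyperspectral camera stores one value per wavelength band.
# Hypothetical band centres in nanometres, from visible to near-infrared.
band_centres_nm = [450, 500, 550, 600, 650, 700, 750, 800, 850, 900]

# Hypothetical reflectance of one leaf pixel at each band (0 = dark, 1 = bright).
leaf_spectrum = [0.05, 0.06, 0.12, 0.08, 0.05, 0.20, 0.45, 0.50, 0.51, 0.50]


def make_cube(height, width, spectrum):
    """Build a height x width x bands 'data cube' filled with one spectrum."""
    return [[list(spectrum) for _ in range(width)] for _ in range(height)]


def spectral_signature(cube, row, col):
    """Return the full spectrum recorded at one pixel."""
    return cube[row][col]


cube = make_cube(2, 3, leaf_spectrum)
signature = spectral_signature(cube, 1, 2)

print(f"RGB pixel stores {len(rgb_pixel)} values")
print(f"Hyperspectral pixel stores {len(signature)} values")
for wavelength, value in zip(band_centres_nm, signature):
    print(f"{wavelength} nm: {value:.2f}")
```

The point is the shape of the data: every pixel carries a whole list of measurements rather than three, which is why hyperspectral images are often described as a cube.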

Crucially, in the same way that a fingerprint can identify a person, the spectral signature reflected from objects can identify properties of materials that would otherwise go unseen.

How so? Well, if you took that selfie with a hyperspectral camera, you’d learn much more about your own face than how stylish you are: you’d be able to record the unique spectral fingerprint of the melanin, water and haemoglobin content of your skin.

That’s why some researchers are exploring the technology’s potential for face recognition and biometric security. But that’s certainly not the only application.

Ever since hyperspectral technology was first developed, researchers have proposed various uses. For doctors, it promises an extra tool to help them diagnose disease by, for instance, rapidly measuring oxygen saturation in tissue. For art conservationists, it enables them to peer into old paintings non-invasively. And for the police, it can rapidly reveal invisible details at crime scenes, such as gunshot residue or blood.
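The fingerprint idea behind all of these uses can be sketched in a few lines of Python. One common way to compare spectra in remote sensing is to treat each spectrum as a vector and measure the angle between them; the sketch below does that against a small reference library. The library entries, their values and the function names are hypothetical, chosen only for illustration, and this is not presented as the method used by any of the researchers in this article.

```python
import math


def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors.

    A small angle means the two spectra have a similar shape,
    regardless of overall brightness.
    """
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to [-1, 1] so rounding error cannot break acos.
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)


def best_match(measured, library):
    """Return the name of the reference spectrum closest in angle."""
    return min(library, key=lambda name: spectral_angle(measured, library[name]))


# Hypothetical reference signatures over five bands (made-up values).
reference_library = {
    "healthy leaf": [0.05, 0.12, 0.05, 0.45, 0.50],
    "stressed leaf": [0.08, 0.14, 0.12, 0.30, 0.32],
    "bare soil": [0.15, 0.20, 0.25, 0.30, 0.35],
}

# A dimmer measurement with the same shape as the healthy leaf.
measured = [0.025, 0.06, 0.025, 0.225, 0.25]

print(best_match(measured, reference_library))
```

Because the comparison looks at the shape of the spectrum rather than its brightness, a leaf in shade and a leaf in full sun can still be matched to the same reference.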

At Aalto University, one research team is finding that hyperspectral imaging can be an excellent tool to monitor and understand forests and peatlands – and they’re doing so by peering down at the Earth with cameras in space.

Miina Rautiainen and her colleagues in the Remote Sensing Research Team at the Department of Built Environment are turning to hyperspectral cameras mounted on satellites – and occasionally planes – to help them model the character of forests and peatlands from up high.

When combined with field observations, this remotely collated data is helping Rautiainen’s team build enhanced computational models of the vegetation in three dimensions. In a forest, that means everything from the leaves at the treetops down to the shrubs on the forest floor – as well as how the 3D structure differs over a region.

This is helpful to know for many reasons. The team’s modelling can help predict biodiversity, as well as the productivity of the vegetation and its ability to suck up carbon from the atmosphere. ‘That’s something that’s very, very interesting at the moment in terms of trying to understand climate change,’ says Rautiainen.

The three-dimensional modelling of the forest can also predict its radiation regime – essentially, how it absorbs and reflects the sun’s radiation. ‘If we want to forecast future climate scenarios, having a realistic description of the vegetation’s shape or 3D form is important,’ she says.

What makes hyperspectral cameras on satellites and planes so useful is that they can reveal things about forests and peatlands that would be difficult to monitor in person. ‘The biggest strength is that we get spatially continuous coverage of the environment,’ says Rautiainen. ‘With satellite data, we get to cover areas where we don’t have observation networks or where it’s politically impossible to go for fieldwork. Or sometimes it’s just that the regions are so remote: there are no roads, no rivers that we could use to get there.’

Satellites also pass over regions regularly, providing continual updates on changes over time. ‘We can, for example, follow the growing season of vegetation in much more detail than what we would be able to do if we were on the ground doing some fieldwork,’ she says.

Rautiainen relishes getting out into the field – indeed, she and her team recently used laser scanners and other tools to measure the physical structures of trees and vegetation up close, and they were shadowed by photographer Sheung Yiu for a project called Ground Truth. Combining field observations with remote sensing data helps them ensure their modelling is closer to reality. Though it’s sadly not possible to spend time beneath the canopies all year round, by peering down from satellites and planes, she can at least stay connected to the forest without leaving her office.

Hyperspectral cameras do have some downsides, however. They are expensive – some cost tens of thousands of euros – and can be relatively bulky and heavy.

That’s why Westerlund and a team of scientists led by photonics professor Zhipei Sun in Aalto’s Department of Electronics and Nanoengineering are now working to commercialise a miniature hyperspectral sensor they’ve developed. It’s so small that it could one day fit inside a smartphone or a small drone flying over a crop field.

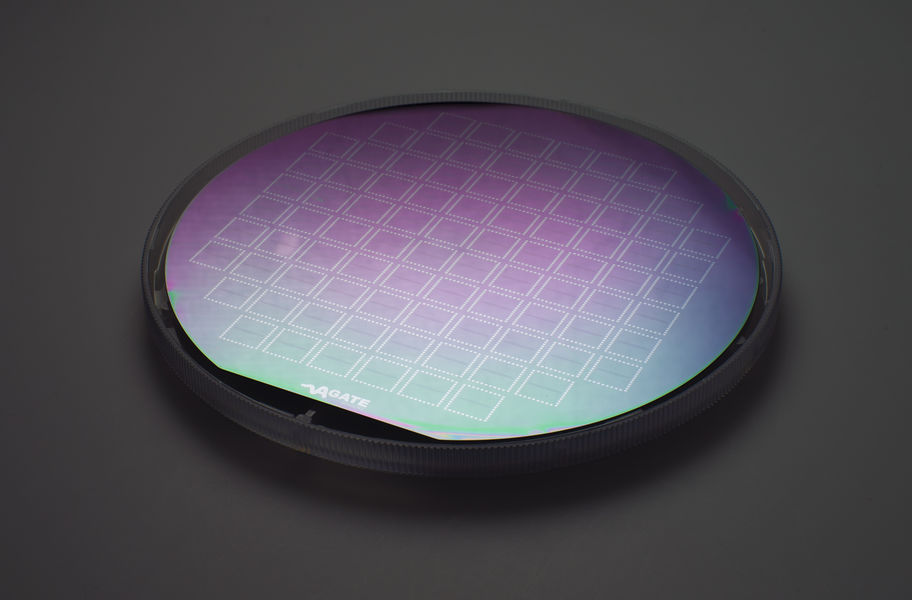

Their miniature hyperspectral technology builds on a fundamental breakthrough in material physics published in the journal Science in 2022. In short, it’s a camera on a chip – but how does it work?

Typically, to build a hyperspectral camera, ‘you need something tunable, which can resolve the light or which can sense different wavelengths of light,’ explains Faisal Ahmed, one of the scientists developing the technology.

To tune light, traditional hyperspectral cameras use bulky optical and mechanical components. ‘You need moving parts, you need gratings, you need prisms. You need to have enough space in your device for the colours to spread out and not overlap,’ explains Andreas Liapis.

With all these components, a typical commercial hyperspectral camera is often bigger than a kitchen toaster – and quite a bit heavier.

But the team found they could replace these mechanical and optical components by fiddling with the voltage across a clever arrangement of nanoscale two-dimensional layered materials, including graphene. This allows them to control the light electrically. ‘We don’t need large bulky components. We just need to tune the voltage, and the rest is taken care of by an algorithm we developed. That’s it,’ says Ahmed.
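The article does not describe the team's algorithm, so the Python sketch below is only a generic illustration of how a spectrum could, in principle, be recovered from voltage-tuned readings. It assumes that each voltage setting makes the sensor respond to the wavelength bands with different, previously calibrated sensitivities, so that each reading is a weighted sum of the incoming spectrum. The calibration table, the spectrum and the function name `solve_linear_system` are all hypothetical.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    # Work on an augmented copy so the inputs are left untouched.
    aug = [list(row) + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        # Swap in the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    # Back-substitute from the last row upwards.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(aug[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (aug[row][n] - known) / aug[row][row]
    return x


# Hypothetical calibration: row i is the sensor's sensitivity to three
# wavelength bands at voltage setting i (made-up numbers).
responsivity = [
    [0.9, 0.3, 0.1],
    [0.4, 0.8, 0.3],
    [0.1, 0.4, 0.9],
]

# Unknown to the sensor: the light spectrum actually hitting it.
true_spectrum = [0.2, 0.7, 0.4]

# What the sensor reports: one reading per voltage setting.
readings = [
    sum(r * s for r, s in zip(row, true_spectrum)) for row in responsivity
]

recovered = solve_linear_system(responsivity, readings)
print([round(value, 3) for value in recovered])
```

In this toy case the readings are noise-free and there are exactly as many voltage settings as bands, so the spectrum comes back exactly. A real device would have noisy readings and many more bands, which is presumably where a more sophisticated algorithm is needed.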

To commercialise the technology, the team is preparing to form a start-up company called Agate Sensors, which will spin out from Aalto in early 2024.

Down the line, they envision a whole host of applications for their tiny camera technology, from the automotive industry to security technologies. In a world where AI is rapidly growing, you can see why: smart machines increasingly need to know what they are looking at, and hyperspectral imaging could help enable that.

It’s early days because the science is so fresh, but one area that the Agate team is looking at first is agriculture, allowing farmers to monitor crops over large scales.

As Ahmed explains: ‘A portable hyperspectral camera mounted on a drone could fly over a field. Then you could easily map where nutrients are needed, where water is needed, and where pesticides are needed.’
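As a rough illustration of what such a map could look like, the Python sketch below uses the normalised difference vegetation index (NDVI), a widely used measure that compares how much near-infrared and red light plants reflect. It is a simplification: NDVI indicates general plant vigour and cannot by itself tell a water shortage from a nutrient or pest problem, and nothing in the article says the Agate team uses it. The reflectance values, the 0.4 threshold and the function names are made up for this example.

```python
def ndvi(red, nir):
    """Normalised difference vegetation index for one pixel."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def flag_low_vigour(red_grid, nir_grid, threshold):
    """Return (row, col) positions whose NDVI falls below the threshold."""
    flagged = []
    for r, (red_row, nir_row) in enumerate(zip(red_grid, nir_grid)):
        for c, (red, nir) in enumerate(zip(red_row, nir_row)):
            if ndvi(red, nir) < threshold:
                flagged.append((r, c))
    return flagged


# Hypothetical reflectance over a 2 x 3 patch of field (made-up values).
red_band = [[0.05, 0.06, 0.20], [0.05, 0.18, 0.06]]
nir_band = [[0.50, 0.48, 0.30], [0.52, 0.28, 0.49]]

# The 0.4 cut-off is an arbitrary illustration, not an agronomic standard.
print(flag_low_vigour(red_band, nir_band, threshold=0.4))
```

Healthy vegetation reflects a lot of near-infrared light and little red, so cells with a low index stand out as places a grower might want to inspect first.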

For now, the team’s next step is to refine and test their design. ‘The eventual plan is to have a proof-of-concept device that we can take into the field and demo, but that will take some time,’ says Ahmed. ‘We’re maturing the technology so it’s ready for commercialisation.’

Westerlund, who is leading the team’s business strategy, credits the team’s successes to the international experience at Aalto, with team members coming together from China, Pakistan, Greece and Finland. ‘What’s really intriguing for me is the fact that we have these very diverse backgrounds. When we talk about topics, we almost always get different views and opinions,’ he explains. ‘I think it’s really beneficial for a project like this to be able to actually view things from different angles and consider different options.’

So the next time you look at a plant or tree, consider that there’s a great deal that your eyes can’t see – but it’s all there in the light bouncing off the leaves. And unlocking those hidden secrets could, one day, be as straightforward as taking a picture with your smartphone.

_______________________________________________________________

* Photo of the bark beetle by Gilles San Martin
