A new study suggests that a placebo effect is at play when people expect their performance to be enhanced by augmentation technologies, such as artificial intelligence (AI). The researchers found that individuals with high expectations of these technologies engage in riskier decision-making, which could be a problem as people adopt these technologies without properly understanding their benefits and limits.

Augmentation technologies that boost our physical, cognitive, or sensory performance have become commonplace. Some are so widely used that they’ve become invisible – spellcheck, for example – and new technologies are emerging that could push our abilities beyond human limits, like exoskeletons and AI-based vision enhancement. But the hype around these technologies also builds expectations, which could lead people to change their behaviour.

‘Individuals are more inclined to take risks when they believe they are enhanced by cutting-edge technologies like AI or brain-computer interfaces,’ says Robin Welsch, assistant professor at Aalto University. ‘This occurs even if no actual enhancement technology is involved, indicating that it’s about people’s expectations rather than any noticeable improvement. The findings also imply that a strong belief in improvement, based on a fake system, can alter decision-making.’

Don’t trust the processor

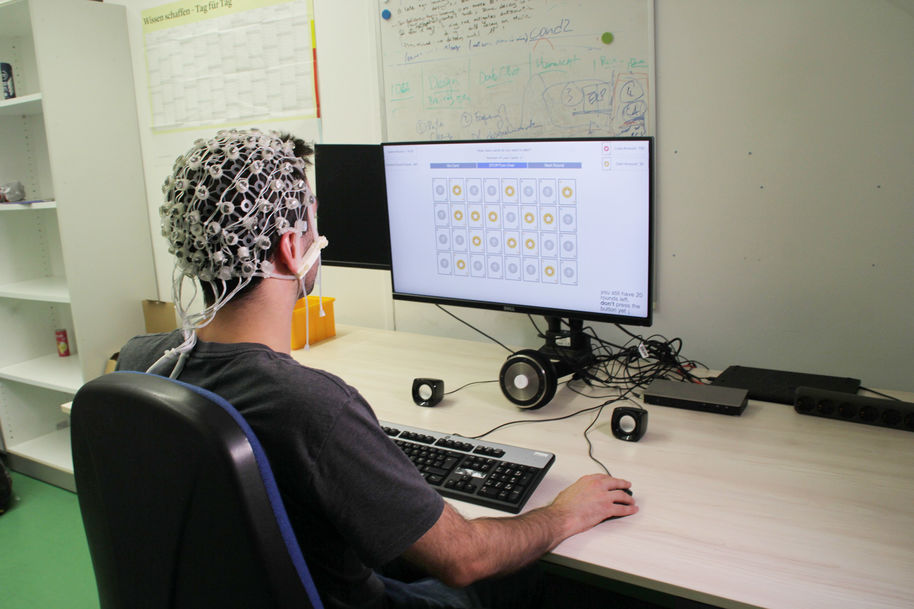

Together with colleagues at LMU Munich, HU Berlin and Aalto University, Welsch measured decision-making and risk-taking behaviour with a well-known psychological experiment, the Columbia Card Task. In the experiment, participants win or lose points by turning over cards with hidden values. The 27 participants were led to believe that an AI-controlled brain-computer interface, the placebo, would enhance their cognitive abilities by using binaural sounds to track the loss cards.

But the game was rigged – the augmentation provided no real benefit, and participants almost never encountered a loss card. Still, most of the participants thought the augmentation had helped them do better, and this made them take on greater risks. These findings show how sham cognitive enhancements can have real effects on risk-taking.

‘The hype surrounding these technologies skews people’s expectations,’ says Steeven Villa, doctoral researcher at LMU Munich. ‘It can lead people to make riskier decisions and favourable user evaluations, which can have real consequences.’