Researchers propose new solutions to alleviate hostility on social media

Arguments on social media, and the echo chambers that recycle strong opinions, are actively studied all over the world. Many researchers have suggested that hatred and hostility between extreme opposites on an issue could be alleviated by recommending a wide range of content across hard-set divides. This way, people would be exposed to content and perspectives that differ from their own fixed opinions.

A joint research effort of Aalto University, the University of Helsinki and Syracuse University challenges this approach, which has attracted a great deal of attention. The researchers analysed approximately 50 000 messages from ten Finnish Facebook groups, half of which had a positive stance towards immigration while the other half were strongly against it. Only two per cent of the links shared in the opposing groups were the same; with only a few exceptions, the groups do not consume the same content.
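As a rough illustration of how such a link overlap could be measured, the sketch below computes the share of distinct links that appear on both sides. It assumes the overlap is defined as a Jaccard index over the two sets of shared links; the article does not state the exact measure the researchers used. The function name `link_overlap` and the example URLs are hypothetical.

```python
def link_overlap(links_a, links_b):
    """Share of distinct links found in both collections (Jaccard index).

    Assumption: overlap = |A and B| / |A or B|. The study's own
    definition may differ.
    """
    set_a, set_b = set(links_a), set(links_b)
    union = set_a | set_b
    if not union:
        # No links on either side: define the overlap as zero.
        return 0.0
    return len(set_a & set_b) / len(union)


# Hypothetical placeholder data, not links from the study.
pro_links = ["news.example/a", "news.example/b", "blog.example/c"]
anti_links = ["news.example/b", "forum.example/d", "forum.example/e"]

overlap = link_overlap(pro_links, anti_links)
print(f"Shared links: {overlap:.0%}")
```

A value close to zero, as in the study's two per cent, would indicate that the two sides draw on almost entirely separate sources.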

After this network analysis, they took a more detailed look at approximately 1 000 comments posted on the few pieces of content that were shared on both sides. The qualitative analysis showed that Facebook as a platform does not encourage users to engage with others and their opinions, even though in principle it provides an arena for open debate.

‘The original content may be a neutral piece of news, but the person sharing it sets it against their own opinion. At the same time, those who disagree may be negatively labelled – and the framing steers the debate onwards. This happens equally in groups that are in favour of immigration and against it. Therefore, we do not believe that content recommendation as such can be the solution, but we need new approaches,’ says Salla-Maaria Laaksonen, researcher at the University of Helsinki.

Based on the research carried out in the field, the team have come up with new solutions to alleviate hostile echo chambers.

‘We could create platforms and groups that highlight the importance of listening and reflecting upon the opinions of others. The person expressing an opinion would then be forced to face the readers' emotional reactions. Instead of judging others, people sharing content would have the opportunity to contemplate what they have said and how it affects others,’ Aalto University researcher Matti Nelimarkka explains.

‘One possible factor explaining and intensifying the polarisation of opinions and attacks against other individuals is the circle of silence. It is created when, for example, a person in favour of immigration is afraid to discuss the possible effects that reception centres may have on communities that host them. The debate is bigoted and aggressive on both sides to such an extent that participants are afraid to voice differing opinions,’ says Nelimarkka.

Researchers are also looking into the possibility of using the social relationships of individuals in content recommendation. For example, an algorithm could recommend a discussion in which a relative or a friend is involved. A person sharing hostile or bigoted content would then be exposed to differing perceptions of people they know, and the dispute would no longer take place just between strangers.

‘Our ideas still require further development so that we can guarantee that the recommendation algorithm does not inflict any harm to people’s relationships and that the algorithm can recommend mind-opening content regardless of where a person might stand on a given issue,’ Nelimarkka notes.
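The idea of using social relationships in content recommendation could be sketched roughly as follows. This is only a minimal illustration, assuming a simple map from each user to their contacts and from each discussion to its participants; it is not the researchers' algorithm, and the names `recommend_discussions`, `friends_of` and `discussions` are hypothetical.

```python
def recommend_discussions(user, friends_of, discussions):
    """Return ids of discussions the user has not joined but a contact has.

    friends_of:  dict mapping a user to the set of their contacts (assumed input)
    discussions: dict mapping a discussion id to the set of its participants
    """
    contacts = friends_of.get(user, set())
    return [
        discussion_id
        for discussion_id, participants in discussions.items()
        if user not in participants and contacts & set(participants)
    ]


# Hypothetical placeholder data.
friends_of = {"anna": {"ben", "cia"}}
discussions = {
    "d1": {"ben", "dan"},   # a contact of anna takes part
    "d2": {"dan"},          # only strangers
    "d3": {"anna", "cia"},  # anna is already involved
}

suggested = recommend_discussions("anna", friends_of, discussions)
```

A real system would also need the safeguards Nelimarkka mentions, such as avoiding recommendations that could harm people's relationships, which this sketch does not attempt.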

Research article: https://dl.acm.org/citation.cfm?id=3196764

Read more about research on echo chambers in social media done at Aalto University:

Conflicting views on social media balanced by an algorithm

http://www.aalto.fi/en/current/news/2017-12-05-012/

2.7 billion tweets confirm: echo chambers in Twitter are very real

http://www.aalto.fi/en/current/news/2018-04-24

More information:

Matti Nelimarkka

Aalto University

matti.nelimarkka@aalto.fi

tel. +358 50 52 75 920

Read more news

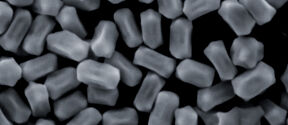

Catalysis in a new light: Microscale interactions could enhance clean energy technologies

A new study provides a more detailed view of how catalysts function during chemical reactions. The discovery could help develop more efficient materials for applications such as green hydrogen production and a more sustainable chemical industry.

Physics Days 2026 gathered Finnish physicists to Aalto

The 2026 edition of the annual conference featured talks on moiré matter, women in physics and paper cuts.

Annual review looked back on the past year

The annual review of the School of Arts, Design and Architecture provided a comprehensive overview of the past year. Members of the community were also awarded in the event.