Oulasvirta and colleagues tested an alternative, computer-assisted method that uses an algorithm to search through a design space, that is, the set of possible solutions to a particular design problem given its multi-dimensional inputs and constraints. They hypothesized that a guided approach could yield better designs by scanning a broader swath of solutions and balancing out human inexperience and design fixation.

Along with collaborators from the University of Cambridge, the researchers set out to compare the traditional and assisted approaches to design, using virtual reality as their laboratory. They employed Bayesian optimization, a machine learning technique that both explores the design space and steers towards promising solutions. ‘We put a Bayesian optimizer in the loop with a human, who would try a combination of parameters. The optimizer then suggests some other values, and they proceed in a feedback loop. This is great for designing virtual reality interaction techniques,’ explains Oulasvirta. ‘What we didn’t know until now is how the user experiences this kind of optimization-driven design approach.’
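To make the feedback loop concrete, here is a minimal sketch of Bayesian optimization with an evaluator in the loop. It is not the study's implementation: the two-parameter design space, the Gaussian-process settings, the upper-confidence-bound rule and the `simulated_designer_rating` function (a stand-in for a human's judgement) are all assumptions chosen for illustration.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential similarity between two sets of points (one per row)."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale ** 2)

def gp_posterior(x_seen, y_seen, x_cand, noise=1e-3):
    """Posterior mean and standard deviation of a zero-mean Gaussian process."""
    k_ss = rbf_kernel(x_seen, x_seen) + noise * np.eye(len(x_seen))
    k_sc = rbf_kernel(x_seen, x_cand)
    solved = np.linalg.solve(k_ss, k_sc)          # shape: (n_seen, n_cand)
    mean = solved.T @ y_seen
    var = 1.0 - np.sum(k_sc * solved, axis=0)     # prior variance is 1
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def suggest(x_seen, y_seen, x_cand, kappa=2.0):
    """Pick the candidate with the highest upper confidence bound.

    A high mean favours promising regions; a high standard deviation
    favours unexplored ones. kappa is an assumed trade-off weight.
    """
    mean, std = gp_posterior(x_seen, y_seen, x_cand)
    return x_cand[np.argmax(mean + kappa * std)]

def simulated_designer_rating(params):
    """Hypothetical stand-in for a human evaluation, peaking at (0.7, 0.3)."""
    target = np.array([0.7, 0.3])
    return float(np.exp(-8.0 * np.sum((params - target) ** 2)))

def run_loop(n_rounds=15, seed=0):
    """Alternate between optimizer suggestions and evaluator ratings."""
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 21)
    x_cand = np.array([[a, b] for a in grid for b in grid])
    # Start from three random designs, as a designer's first guesses might be.
    x_seen = rng.uniform(0.0, 1.0, size=(3, 2))
    y_seen = np.array([simulated_designer_rating(x) for x in x_seen])
    for _ in range(n_rounds):
        x_next = suggest(x_seen, y_seen, x_cand)      # optimizer proposes
        y_next = simulated_designer_rating(x_next)    # evaluator responds
        x_seen = np.vstack([x_seen, x_next])
        y_seen = np.append(y_seen, y_next)
    best = int(np.argmax(y_seen))
    return x_seen[best], y_seen[best], x_seen

if __name__ == "__main__":
    best_x, best_y, history = run_loop()
    print("best parameters:", best_x, "rating:", round(best_y, 3))
```

In a real study the rating would come from a person trying each suggested setting, or from measured task performance, rather than from a formula. Raising `kappa` makes the sketch explore more of the design space, and lowering it makes it settle sooner on what already looks good.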

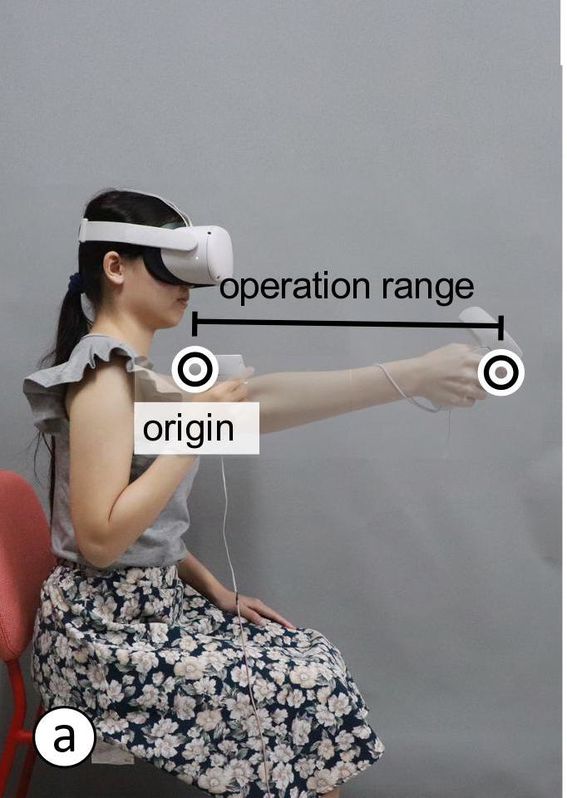

To find out, Oulasvirta’s team asked 40 novice designers to take part in their virtual reality experiment. The subjects had to find the best settings for mapping the location of their real hand holding a vibrating controller to the virtual hand seen in the headset. Half of these designers were free to follow their own instincts in the process, and the other half were given optimizer-selected designs to evaluate. Both groups had to choose three final designs that would best capture accuracy and speed in the 3D virtual reality interaction task. Finally, subjects reported how confident and satisfied they were with the experience and how in control they felt over the process and the final designs.

The results were clear-cut: ‘Objectively, the optimizer helped designers find better solutions, but designers did not like being hand-held and commanded. It destroyed their creativity and sense of agency,’ reports Oulasvirta. The optimizer-led process allowed designers to explore more of the design space than the manual approach did, leading to more diverse design solutions. The designers who worked with the optimizer also reported less mental demand and effort in the experiment. However, this group scored lower on expressiveness, agency and ownership than the designers who did the experiment without a computer assistant.

‘There is definitely a trade-off,’ says Oulasvirta. ‘With the optimizer, designers came up with better designs and covered a more extensive set of solutions with less effort. On the other hand, their creativity and sense of ownership of the outcomes was reduced.’ These results are instructive for the development of AI that assists humans in decision-making. Oulasvirta suggests that people need to be engaged in tasks such as assisted design so that they retain a sense of control, don’t get bored, and gain more insight into how a Bayesian optimizer or other AI is actually working. ‘We’ve seen that inexperienced designers especially can benefit from an AI boost when engaging in our design experiment,’ says Oulasvirta. ‘Our goal is that optimization becomes truly interactive without compromising human agency.’

This paper was selected for an honourable mention at the ACM CHI Conference on Human Factors in Computing Systems in May 2022.

Reference: Chan, L., Liao, Y.C., Mo, G.B., Dudley, J.J., Cheng, C.L., Kristensson, P.O. and Oulasvirta, A., 2022. Investigating Positive and Negative Qualities of Human-in-the-Loop Optimization for Designing Interaction Techniques. doi: 10.1145/3491102.3501850